NVIPM Validation Summary

The data presented above under the title “TTP” does not apply to NVIPM, even though NVIPM usurps the TTP name. None of the references [1-7,15,16] refer to NVIPM, even though some peer-reviewed journal articles would suggest otherwise [8-11]. The complete lack of anything to discuss here is the result of Future Command's unwillingness to publish what they have learned: that NVIPM is not an accurate predictor of anything. All we can say is that NVIPM is unvalidated, and that negative statement is “proved” by the lack of evidence in support of NVIPM.

The Optical Engineering paper [9] requires some discussion, simply because the reviewers and editors let the authors make claims in the conclusion that nothing in the paper supports.

First, the CTF experiment description clearly demonstrates the non-standard CTF used by NVIPM. The authors state:

“The CTF is measured at each spatial frequency, ξ, using a displayed sinusoidal stimulus pattern with an amplitude (Lp), a mean luminance (L0), and a stimulus size defined by the apparent target angle (w) equal to the square root of the target area in degrees.”

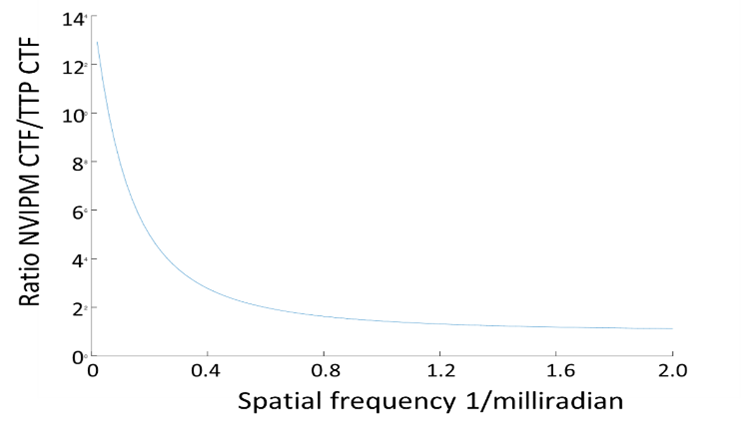

A one-meter target at 1 kilometer viewed through a 10X spotting scope would subtend 0.57 degrees. The figure shows the ratio of NVIPM CTF to TTP CTF (TTP CTF is the one used by the vision community). Note that at low spatial frequencies there are few sine wave periods in 0.57 degrees, and the NVIPM thresholds are an order of magnitude high. The NVIPM CTF threshold decreases as spatial frequency increases because there are more cycles to look at.

The point of the CTF ratio plot is that the metric calculation is very different for NVIPM than for TTP. The larger the target is on the display, the closer the NVIPM calculation is to the TTP calculation.
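The 0.57 degree figure, and the shortage of sine wave periods at low spatial frequencies, can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names and the list of spatial frequencies are hypothetical, the frequencies are assumed to be in cycles per degree at the eye, and magnification is applied as a simple multiplier on the true angle (a small-angle approximation). It does not compute either CTF; it only counts how many cycles fit across the apparent target angle.

```python
import math


def apparent_angle_deg(size_m, range_m, magnification=1.0):
    """Apparent angular subtense in degrees.

    Magnification is treated as a plain multiplier on the true angle,
    which is a small-angle approximation (an assumption of this sketch).
    """
    true_angle_rad = 2.0 * math.atan(size_m / (2.0 * range_m))
    return math.degrees(true_angle_rad) * magnification


def cycles_across_target(angle_deg, freq_cyc_per_deg):
    """Number of sine wave periods that fit across the apparent target angle."""
    return angle_deg * freq_cyc_per_deg


# One-meter target at 1 kilometer through a 10X scope, as in the text.
angle = apparent_angle_deg(size_m=1.0, range_m=1000.0, magnification=10.0)
print(f"apparent target angle: {angle:.2f} degrees")

# Hypothetical spatial frequencies, chosen only to show the trend.
for freq in (0.5, 1.0, 2.0, 4.0, 8.0, 16.0):
    cycles = cycles_across_target(angle, freq)
    print(f"{freq:5.1f} cycles/degree -> {cycles:5.2f} cycles across the target")
```

Under these assumptions, fewer than one full period fits across the target below roughly 1.75 cycles per degree, which is the regime where the stimulus-size-dependent threshold departs most from the standard CTF. Repeating the calculation with a four-degree target angle shows several cycles across the target even at low frequencies.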

The authors published the results of three of eighty-one TTP experiments. They have the PID data on all eighty-one but have published the results for only three. Why not credit at least those three experiments as validation, even though the errors are larger than those of the TTP predictions? Because all three presented the targets with a four-degree target angle. Those three experiments presented the targets large enough on the display that the NVIPM CTF approaches the standard CTF. That is, the current Army modelers have published only those NVIPM results where the model approaches the TTP model. That selection of experiments makes our point, not theirs.

There is an unsupported statement in [9] that the original TTP has trouble adjusting to different target angles. That is totally untrue. A wide range of target angles is covered, considering not just the experiments in [4] but also those in [4-7] and [15,16] and the field test results over range. Again, the current Army modelers make simple, declarative statements unsupported by any discussion or examples. There are many other such statements in [9] and in various conference articles over the years: simple, unsupported statements, all of which ignore the TTP validation literature.

The authors of [9] describe a two-hand, hand-held PID experiment in which they use the PID data to calibrate a new empirical constant. The authors admit in the body of the paper that there are unresolved issues with the noise model, but the point here is that no validation data was presented. The conclusion of [9] once again offers only simple declarative statements about how validated their model is.

The NVIPM CTFsys model is complete nonsense and, of course, no data is or can be presented in support of that model. Future Command has published no validation of the NVIPM predictions, although the Optical Engineering editorial staff and reviewers have let them make unsupported statements in the conclusions of [9]. Read the paper. It is not hard, and there is nothing there. The confusing part is that the authors actually admit to serious, unsolved problems with the NVIPM model, yet the reviewers still let the Future Command modelers claim success in the conclusion section.

Conclusions

There is a long and successful history of TTP validation, including predicting the CTF-in-noise data of independent researchers and predicting PID for target sets of tactical vehicles, faces, characters, and shapes. The fact that the erf function relates metric value to PID is an indicator of model viability, as is predicting the assessment of aviators about the performance of pilotage aids.

NVIPM does not use the TTP metric. We understand that the Army calls the metric in NVIPM “TTP.” Doing otherwise would separate NVIPM from any semblance of legitimacy.

The Army has never explained the transition of their model away from TTP. They have published no supporting data, no theory, and no explanation. At this point, it appears there is no stopping the dishonest destruction of valid research by an uninterested and lazy Army bureaucracy.

Certainly Future Command management (sic) will do nothing, and clearly the current Army modelers are getting their way with everyone in a position to do anything. Why is that? It appears that no one really cares about these models or understands their value, but what caliber of technical community knowingly lets good work languish and sloppy work thrive?